Beyond traditional A/B Tests

Introduction

Experiments help make decisions, but "decisions are influenced by those who show up" [1].

As engineers, we all strive to build products that customers love. We want to add the features that matter most to customers. Often, we resort to running experiments: trying out a variety of feature sets and then deciding which version of the product ships to the larger audience.

It makes sense. Nobody wants to invest time in building things that will not add value to the product. There are many proven methods to plan, implement, and evaluate experiments, but the most popular and simplest is the "A/B test" or, in some cases, extensions like "A/B/N" tests or "multi-armed bandits".

Problems with Short-lived A/B tests

If running a test and launching a product that customers love is so simple, why do products fail?

Of course, a lot of it is context-driven (e.g. cohort selection, a questionable hypothesis, bad timing, other experiments).

But even if you manage to get everything right, these experiments don’t help measure long-term user behavior. And that’s exactly my gripe with short-lived A/B tests.

Core users show up. What about those who are not as engaged as the core users? If we base all our decisions on those who showed up, we risk building a product for the users we already have instead of the users we want to have. How do you avoid this engagement bias?

I started searching online and landed on an interesting paper, "Focusing on the Long-term: It’s good for Users and Business" [2], in which the authors propose an alternative to short A/B tests.

This post is a summary of my understanding of the paper.

Summary

[Excerpts from the paper]

Over the past few years, online experimentation has become a hot topic. Not only do companies run a lot of experiments, but they also contribute to the vast amount of literature on how to run large-scale experiments.

Several of those papers highlight the importance of developing an ‘OEC’ (Overall Evaluation Criteria) for online experiments. In theory, an OEC should include metrics that reflect long-term improvement rather than short-term gains. The authors discuss a specific kind of experiment: one that determines which ads to show when users search on Google. "Optimizing which ads show based on short-term revenue is the obvious and easy thing to do, but may be detrimental in the long-term if the user experience is negatively impacted."

But how do you even measure long-term user impact? Most A/B tests are short-lived. There could be many experiments over a period of time and it will be difficult to attribute long-term metric changes to a particular experiment.

The authors also introduce a few new terms:

ads-sightedness – users start noticing ads

ads-blindness – users start to ignore ads

short-term impact – immediate result of the experiment

long-term impact – the impact if all users were to receive the treatment in perpetuity

learned impact – how users' behavior changes in response to the treatment, observed during the experiment window and then after the experiment ends

They identify long-term revenue as their OEC, defined by this equation:

Long-term Revenue = Users * (Tasks / User) * (Queries / Task) * (Ads / Query) * (Clicks / Ad) * (Cost / Click)

Showing more ads per query and optimizing for more clicks per ad might improve short-term metrics, but if users start abandoning Google, long-term revenue will suffer.
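To make the trade-off concrete, here is a minimal sketch of the equation in Python. The `RevenueFunnel` class and every number in it are made up for illustration; they are not from the paper. It only shows how a gain in one factor can be outweighed by small losses in the others.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class RevenueFunnel:
    """One field per factor of the long-term revenue equation."""
    users: float
    tasks_per_user: float
    queries_per_task: float
    ads_per_query: float
    clicks_per_ad: float
    cost_per_click: float

    def revenue(self) -> float:
        return (
            self.users
            * self.tasks_per_user
            * self.queries_per_task
            * self.ads_per_query
            * self.clicks_per_ad
            * self.cost_per_click
        )


# All numbers below are hypothetical.
baseline = RevenueFunnel(
    users=1000, tasks_per_user=10, queries_per_task=2,
    ads_per_query=1.0, clicks_per_ad=0.05, cost_per_click=0.50,
)

# Short term: 20% more ads per query, everything else unchanged.
short_term = replace(baseline, ads_per_query=1.2)

# Long term (assumed): same ad load, but some users leave, the rest do
# fewer tasks, and ads get clicked less often.
long_term = replace(short_term, users=900, tasks_per_user=9, clicks_per_ad=0.04)

for label, funnel in [
    ("baseline", baseline),
    ("short term", short_term),
    ("long term", long_term),
]:
    print(f"{label:>10}: {funnel.revenue():.2f}")
```

With these made-up inputs, revenue goes from 500 to 600 in the short term and then falls to 388.80 once the user-side factors shrink, which is the pattern the authors warn about.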

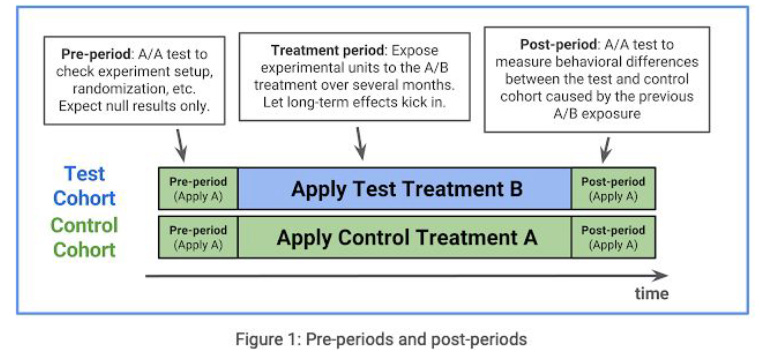

To address the problems with a naïve A/B test setup, the authors propose running two A/A experiments before and after the actual A/B test.

A `pre-period` to ensure that there are no statistically significant differences between the two Cohorts when the experiment starts.

A `post-period` where any behavioral differences due to user learning are measured. The cohort that received the treatment goes back to the control experience, so the authors argue that any difference in behavior between the two cohorts in the post-period is most likely the learned effect. It is a bit like observing withdrawal symptoms.

With this setup alone, the learned effect can be measured only in the post-period, after the experiment has ended. No intermediate results are available while the treatment is running.
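The pre-period and post-period comparisons can be sketched as follows. This is only an illustration: the daily click-through rates are invented, the helper names are mine, and a Welch t statistic on four daily values stands in for whatever significance test a real analysis would use.

```python
from math import sqrt
from statistics import mean, variance


def relative_diff(treated, control):
    """Relative difference of the cohort means: (T - C) / C."""
    return (mean(treated) - mean(control)) / mean(control)


def welch_t(treated, control):
    """Welch's t statistic for two samples with possibly unequal variances."""
    se = sqrt(variance(treated) / len(treated) + variance(control) / len(control))
    return (mean(treated) - mean(control)) / se


# Hypothetical daily click-through rates. Both cohorts get the control
# experience (A/A) in the pre-period and again in the post-period.
pre_control = [0.050, 0.051, 0.049, 0.050]
pre_treated = [0.050, 0.050, 0.051, 0.049]
post_control = [0.050, 0.051, 0.049, 0.050]
post_treated = [0.046, 0.047, 0.045, 0.046]

pre_gap = relative_diff(pre_treated, pre_control)
post_gap = relative_diff(post_treated, post_control)

print(f"pre-period gap:  {pre_gap:+.1%} (t = {welch_t(pre_treated, pre_control):.2f})")
print(f"post-period gap: {post_gap:+.1%} (t = {welch_t(post_treated, post_control):.2f})")
```

A pre-period gap near zero says the cohorts started out comparable. A post-period gap that persists after the treatment is switched off is what the authors would read as the learned effect.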

To fix this, the authors recommend running a parallel experiment (experiment 2) serving the same treatment B. Remember, the treatment group in experiment 1 also receives the same treatment B. Participants in the second experiment are re-randomized daily.
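One common way to get a fixed cohort and a daily re-randomized cohort is hash-based bucketing. The sketch below assumes that approach; the salts (`exp1`, `exp2`), the 5% cohort size, and the function names are all hypothetical, and this post does not cover how the paper actually assigns users.

```python
import hashlib


def bucket(key: str, n_buckets: int = 100) -> int:
    """Map a string key to a stable bucket in [0, n_buckets)."""
    digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
    return int(digest, 16) % n_buckets


def in_fixed_cohort(user_id: str, percent: int = 5) -> bool:
    """Experiment 1: the key is the user only, so membership never changes."""
    return bucket(f"exp1:{user_id}") < percent


def in_daily_cohort(user_id: str, day: str, percent: int = 5) -> bool:
    """Experiment 2: the day is part of the key, so membership is redrawn daily."""
    return bucket(f"exp2:{day}:{user_id}") < percent


users = [f"user-{i}" for i in range(2000)]
fixed = {u for u in users if in_fixed_cohort(u)}
day_one = {u for u in users if in_daily_cohort(u, "2024-01-01")}
day_two = {u for u in users if in_daily_cohort(u, "2024-01-02")}

print("fixed cohort size:", len(fixed))
print("daily cohort sizes:", len(day_one), len(day_two))
print("users in the daily cohort on both days:", len(day_one & day_two))
```

Because the daily cohort is redrawn each day, its members have had little prior exposure to treatment B, while the fixed cohort accumulates exposure. A real system would also keep the two cohorts disjoint, which this sketch does not do.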

On any given day of the treatment period, experiment 1 serving treatment B and experiment 2 serving treatment B define a B/B test. Because both groups receive the same treatment on that day, any difference between them reflects how long the users in experiment 1 have been exposed to it.

The authors argue that by running the two experiments simultaneously, they were able to follow changes in user behavior while they were happening. They also share results from their experiments, which led them to change the AdWords auction ranking function to account for the long-term impact of showing a particular ad, along with results on the effect of ad load on smartphones.
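A rough sketch of that daily B/B comparison, with invented numbers: the function name and both series are hypothetical, and the gap is just the relative difference in a daily metric between the two cohorts.

```python
def learned_effect_by_day(long_exposed, freshly_exposed):
    """Relative gap between the two B cohorts, one value per day."""
    if len(long_exposed) != len(freshly_exposed):
        raise ValueError("both series need one value per day")
    return [
        (old - new) / new
        for old, new in zip(long_exposed, freshly_exposed)
    ]


# Hypothetical daily click-through rates under treatment B.
experiment_1 = [0.060, 0.057, 0.054, 0.052, 0.051]  # same users every day
experiment_2 = [0.060, 0.060, 0.060, 0.060, 0.060]  # re-randomized daily

gaps = learned_effect_by_day(experiment_1, experiment_2)
for day, gap in enumerate(gaps, start=1):
    print(f"day {day}: {gap:+.1%}")
```

Plotting these gaps over the treatment period would give a learning curve that is available while the experiment is still running, rather than only in the post-period.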

Conclusion

[from the paper]

A recurring theme throughout this paper was focusing on the _long-term_ and identifying solid overall evaluation criteria.

We might be tempted to look at the result of a small experiment and start showing more ads on search pages. But it could have a negative long-term impact.

The paper discussed the pitfalls of a naive A/B test. The idea of sandwiching the A/B test between two A/A tests, instead of running it in isolation, was super interesting.

Further Reading/Things to Ponder

I would love to read more about long-term user effects. At one of my previous companies, sales from the online site plateaued over the course of a week for no 'apparent' reason. The ability to correlate soft sales with an experiment or feature would have been handy.

It would be interesting to know if other teams within Google have adopted this approach.

What’s the ideal duration for running experiments? How do we eliminate bias / truly randomize cohort selection? Ron Kohavi has some interesting ideas on running experiments at scale. Notes from his book deserve a dedicated post.

How do we run experiments without stepping on each other's toes? Someone might be running an experiment to improve user engagement while another team might be running an experiment to monetize site pages *cough**cough*

References

ControlledExperiments.pdf

Linear Digressions Podcast

https://conferences.oreilly.com/strata/strata-ca-2018/cdn.oreillystatic.com/en/assets/1/event/269/Trapped%20by%20the%20present_%20Estimating%20long-term%20impact%20from%20A_B%20experiments%20Presentation.pdf

https://storage.googleapis.com/pub-tools-public-publication-data/pdf/43887.pdf